Tech Node 927779663 Neural Matrix

Tech Node 927779663 Neural Matrix presents a modular framework that fuses neural processing with scalable hardware to accelerate inference. It emphasizes coherent feedback loops, cross-core integration, and heterogeneous substrates for adaptable performance. The approach promises measurable throughput and stable latency, evaluated through objective metrics and phased pilots. For organizations, the question is how to define a baseline, establish governance, and set up continuous monitoring to realize business-aligned gains and justify further investment.

What Is Tech Node 927779663 Neural Matrix and Why It Matters

Tech Node 927779663 Neural Matrix is a framework for integrating neural processing with scalable hardware architectures, enabling accelerated inference and adaptable computation across diverse workloads.

The neural matrix concept centers on modularity, enabling rapid reconfiguration while preserving stability.

Its emphasis on scalable hardware, performance, and adaptability positions it as a strategic tool for research and industry teams seeking flexible, high-efficiency AI systems.

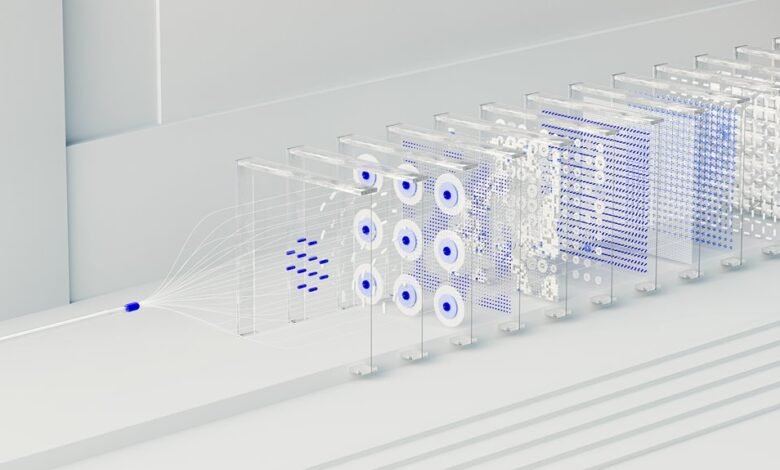

How Neural Matrix Blends Neural Architecture With Scalable Hardware

Neural Matrix blends neural architecture with scalable hardware by tightly coupling modular neural components to adaptable computing substrates, enabling dynamic reconfiguration without sacrificing stability.

The approach emphasizes neural integration across heterogeneous cores, supporting hardware scalability while preserving robust performance.

Continuous learning emerges through coherent feedback loops, driving energy efficiency and resilience.

This design leaves room for open-ended experimentation within rigorous architectural constraints while still supporting scalable deployment.

Real-World Capabilities: Performance, Adaptability, and Efficiency

The real-world capabilities of Neural Matrix emerge from its integration of modular neural components with adaptable hardware substrates, enabling measured performance gains across diverse workloads.

This configuration supports scalable performance and adaptive efficiency, aligning with dynamic demands while preserving stability.

Analytical evaluation indicates consistent throughput improvements, predictable latency, and resource-conscious operation, underscoring a design oriented toward flexible, resilient deployment within varied environments.

Throughout, the design pairs freedom to experiment with rigorous evaluation.

Getting Started: Evaluating, Deploying, and Measuring Impact

How should organizations begin with Neural Matrix to assess value, deploy effectively, and quantify impact? Stakeholders define objective evaluation metrics, align with business goals, and establish baseline measurements.
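As a rough illustration of what a baseline measurement could look like, the sketch below times a generic inference callable and summarizes latency and throughput. It is a minimal example under stated assumptions: `infer` and `measure_baseline` are hypothetical names, not part of any Neural Matrix API, and the callable stands in for whatever system is being evaluated.

```python
import math
import statistics
import time
from typing import Any, Callable, Dict, Sequence


def measure_baseline(
    infer: Callable[[Any], Any],  # hypothetical: any single-request inference callable
    inputs: Sequence[Any],
    warmup: int = 3,
) -> Dict[str, float]:
    """Time each call and summarize latency (ms) and throughput (requests/s)."""
    if not inputs:
        raise ValueError("inputs must not be empty")

    # Warm-up calls are not timed, so one-time setup cost does not skew the baseline.
    for item in inputs[:warmup]:
        infer(item)

    latencies_ms = []
    start = time.perf_counter()
    for item in inputs:
        t0 = time.perf_counter()
        infer(item)
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed = time.perf_counter() - start

    ordered = sorted(latencies_ms)
    # Nearest-rank 95th percentile of the timed calls.
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "requests": float(len(ordered)),
        "p50_ms": statistics.median(ordered),
        "p95_ms": ordered[p95_index],
        "throughput_rps": len(ordered) / elapsed if elapsed > 0 else float("inf"),
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a call into the system under evaluation.
    print(measure_baseline(lambda x: sum(range(x)), [10_000] * 50))
```

Recording these numbers before any pilot starts gives later comparisons a fixed reference point. The choice of percentiles and warm-up count here is illustrative and should follow the metrics the stakeholders actually agreed on.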

Adoption proceeds through disciplined deployment strategies, phased pilots, and rigorous validation.

Continuous monitoring, transparent reporting, and impact analysis enable disciplined optimization, leaving room to iterate while maintaining governance, managing risk, and demonstrating measurable return on investment.
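One simple form continuous monitoring can take is a rolling check of recent latency against an agreed budget. The sketch below is only an assumption about how such a check might be wired up: `LatencyMonitor`, `budget_ms`, and `window` are hypothetical names chosen for illustration, and a real deployment would likely feed a metrics system rather than an in-process object.

```python
from collections import deque
from statistics import fmean
from typing import Dict


class LatencyMonitor:
    """Keep the most recent latency samples and flag budget breaches."""

    def __init__(self, budget_ms: float, window: int = 100) -> None:
        if budget_ms <= 0 or window <= 0:
            raise ValueError("budget_ms and window must be positive")
        self.budget_ms = budget_ms
        # A bounded deque drops the oldest sample once the window is full.
        self._samples = deque(maxlen=window)

    def record(self, latency_ms: float) -> bool:
        """Add a sample; return True when the rolling mean exceeds the budget."""
        self._samples.append(latency_ms)
        return fmean(self._samples) > self.budget_ms

    def report(self) -> Dict[str, float]:
        """Summarize the current window for periodic reporting."""
        if not self._samples:
            return {"samples": 0.0, "mean_ms": 0.0, "max_ms": 0.0,
                    "budget_ms": self.budget_ms}
        return {
            "samples": float(len(self._samples)),
            "mean_ms": fmean(self._samples),
            "max_ms": max(self._samples),
            "budget_ms": self.budget_ms,
        }


if __name__ == "__main__":
    monitor = LatencyMonitor(budget_ms=10.0, window=3)
    for sample in (5.0, 5.0, 30.0, 5.0, 5.0, 5.0):
        if monitor.record(sample):
            print("latency budget exceeded:", monitor.report())
```

A rolling mean is used here for simplicity. A percentile-based check would be less sensitive to a single outlier and may suit latency targets better.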

Conclusion

The Neural Matrix stands as a loom where threads of computation and hardware interweave, forging a fabric capable of rapid reconfiguration without fraying. Its modular design guides systems through diverse workloads with disciplined, measurable latency and throughput. As pilots unfold and metrics tighten, the architecture is meant to keep a stable heartbeat beneath changing demand, translating abstract efficiency into tangible value. In this balance, innovation and reliability align, signaling disciplined progress rather than mere acceleration.